Blog Archives

Red Gate Backup Restore of the Master Database (Part 8)

One of the errors I encountered with the Red Gate Backup Pro tools, which I have discussed extensively this month, came from running the DBCC CHECKDB command on a temporarily restored backup of the master database. It reported consistency errors that did not appear in the actual master database. The following are some interesting blog articles in response to this issue:

- Response from Red Gate.

- Response from Paul Randal and Kimberly Tripp in SQL Server Pro magazine.

Basically, you will need to check the master database separately rather than through a temporary restore. I run an integrity check weekly against the system databases. It is a good idea to follow the recommendations from Red Gate listed in the link above. Enjoy!

Red Gate Backup Pro Continued (Part 7)

If you have been keeping score at home, I have been trying to work out the issues of implementing Red Gate SQL Backup Pro and Virtual Restore. This series may sound like a bunch of complaints, but it is not. I am just working through the issues, hoping to help others who are struggling and to improve the product if possible. If some of these items are easily fixed, I could not find the information easily on their website; otherwise I would not have posted them. Or it could quite possibly be that I am just an idiot.

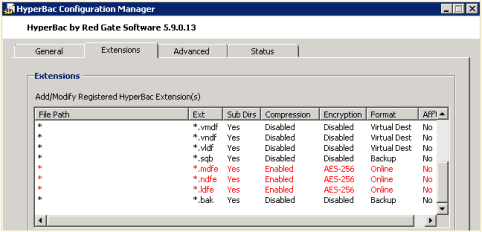

I was able to resolve one issue from yesterday's post: the weekly regular SQL Server copy-only backup. By tweaking the HyperBac Configuration Manager settings for the BAK extension and disabling HyperBac compression (see Figure 1), we were able to get default SQL Server backups running once a week while using SQL Backup Pro for our daily backups.

We also noticed a weird error in our SQL Monitor tool when using Virtual Restore (see Figure 2). The drive where we store our Virtual Restores is only 300 GB. It looks like the Virtual Restores raise a drive space error based upon the “actual” size of the restore instead of the virtual size.

Just in case you are wondering, I compiled my long list of quirks and submitted it to Red Gate support. I am confident that they will be able to help, as they have been excellent in the past. Personally, I just like to figure things out before I talk with support, which many probably see as a character flaw. Maybe it is male ego or pride, but I like to solve problems without help if at all possible. On the other hand, I am never afraid to ask for help; I just want to make sure I have tried everything beforehand. Enjoy.

Red Gate Backup Pro Update After A Weekend of Jobs (Part 6)

Last week I blogged about my experiences implementing the Red Gate Backup Pro and Virtual Restore. Today I have a few more observations and items that I need to investigate.

- We set up a weekly copy-only backup using default SQL Server compression to give my supervisor ‘warm and fuzzies’ over switching to a proprietary backup format. We noticed this weekend that the servers with the HyperBac/Virtual Restore tools installed failed on a regular backup. When we manually stopped the HyperBac service and ran the backup, it completed successfully. When we re-enabled the service, the backup failed again. We decided to forgo using HyperBac. I may try deactivating that part of the license to see if that alleviates the issue.

- Some of our restores fail when we script them and run fine when we run them manually.

- Some of the restores report DBCC CHECKDB issues, but when we run DBCC CHECKDB on the actual database there are no issues.

- I would like to script the real Virtual Restore product, but as of yet have been unable to.

I will keep everyone posted as to my progress. Enjoy!

Red Gate Backup Pro Continued (Part 5)

Continuing my series on the Red Gate Backup Pro 7.2 utility for SQL Server: yesterday I tried, rather unsuccessfully, to set up a development server to do all of my virtual restores in order to verify the backups of my production servers. It works great on this particular development server for the backups taken on that same server. However, doing this across servers requires a complex array of directions and advanced security options that really obfuscate a simple idea. Can it be done? Red Gate says yes, with directions provided on their website (among them this link and this link) in addition to their security model.

Maybe I have missed something; I am not sure. But it looks to me like the security is far more advanced than it needs to be for the idea of a ‘virtual restore’, and I do not want a simple AD account to have so many privileges. Maybe I will step back and approach it again after the holidays.

However, if that falls through I will just set this up on each production server. I am also trying to script all of these virtual restores into a single script that grabs all of the databases instead of hard coding each individual database in the script. This will automate my maintenance such that when new databases are created, I will not have to worry about setting them up in the restore script. I have some ideas but have not been able to get them to work yet. Press on and enjoy!
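One way to avoid hard-coding each database is to generate the restore-and-check statements from a list of database names. The following is only a rough sketch in Python that builds native T-SQL text; it does not use Red Gate's own scripting interface. The `<database>.bak` file naming, the logical file names `<database>` and `<database>_log`, the folder paths, and the function names are all assumptions made for illustration.

```python
# Sketch only: this builds T-SQL text and never connects to SQL Server.
# The <database>.bak naming, the logical file names, and both folders
# are assumptions made for illustration.

SYSTEM_DATABASES = {"master", "model", "msdb", "tempdb"}


def quote_name(name):
    """Bracket-quote an identifier, doubling any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def quote_text(value):
    """Render an N'...' string literal, doubling any single quote."""
    return "N'" + value.replace("'", "''") + "'"


def restore_check_script(database, backup_dir, restore_dir):
    """T-SQL to restore one backup under a temporary name, check it, drop it."""
    temp = database + "_Restored"
    backup_file = f"{backup_dir}\\{database}.bak"
    data_file = f"{restore_dir}\\{temp}.mdf"
    log_file = f"{restore_dir}\\{temp}.ldf"
    return "\n".join([
        f"RESTORE DATABASE {quote_name(temp)}",
        f"    FROM DISK = {quote_text(backup_file)}",
        # Assumes logical file names <database> and <database>_log.
        f"    WITH MOVE {quote_text(database)} TO {quote_text(data_file)},",
        f"         MOVE {quote_text(database + '_log')} TO {quote_text(log_file)};",
        f"DBCC CHECKDB ({quote_name(temp)}) WITH NO_INFOMSGS, ALL_ERRORMSGS;",
        f"DROP DATABASE {quote_name(temp)};",
    ])


def restore_check_all(databases, backup_dir, restore_dir):
    """One script for every user database; system databases are skipped."""
    user_dbs = [d for d in databases if d.lower() not in SYSTEM_DATABASES]
    return "\n\n".join(
        restore_check_script(d, backup_dir, restore_dir) for d in user_dbs
    )


if __name__ == "__main__":
    print(restore_check_all(["master", "Sales", "HR"], r"D:\Backups", r"E:\Restores"))
```

The list of names could come from a query such as `SELECT name FROM sys.databases`, so that newly created databases are picked up without editing the script. Real logical file names vary per database and would need to be looked up rather than assumed.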

Some Additional Findings on Red Gate Backups (Part 4)

I just wanted to point out a couple of things I noticed after a weekend of Red Gate SQL Backup Pro compressed backups and virtual restores on our development servers:

- Each temporary <database_name>_Restored database generates a few SQL Monitor alerts before it is dropped once DBCC CHECKDB has run against it. On our system we got up to five notifications per database:

  - Database file size increased (Custom metric)

  - Rate of write transaction/sec increased (Custom metric)

  - Custom metric collection error

  - Page verification

  - Database unavailable

- When using a plain-Jane AD account to install and run the backups, you need to go into each individual database and add that user to the ‘db_backupoperator’ database role. This is not necessarily Red Gate’s fault, as SQL Server does not have this role at the instance level (I still do not understand why, but maybe I am just an idiot). However, in my opinion the Red Gate tool should have warned me about this at some point instead of giving a cryptic error message on failure. The Red Gate security model states that the account needs to be part of the sysadmin fixed server role, which goes against best practices (further down the page it does give a workaround like the one I suggested, but I didn’t make it that far the first time I read the page, nor the second).

- There were a few servers where I had difficulty installing the agent unless I used an admin account, even though the regular account had the ‘log on as a service’ right and the sysadmin role as well as permissions on all of the folders. I could then switch the service back to the regular account after installation, and the service started fine. Those servers might have been missing the ‘log on locally’ right for that account. I will try that on the next server to test it.
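For the db_backupoperator point above, adding the account to the role in every database by hand gets tedious. Here is a minimal sketch in Python that generates the T-SQL for a list of databases. The login name, the database names, and the function names are hypothetical, and the `ALTER ROLE ... ADD MEMBER` form assumes SQL Server 2012 or later.

```python
from typing import Iterable


def quote_name(name: str) -> str:
    """Bracket-quote an identifier, doubling any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def quote_text(value: str) -> str:
    """Render an N'...' string literal, doubling any single quote."""
    return "N'" + value.replace("'", "''") + "'"


def grant_backup_operator(login: str, databases: Iterable[str]) -> str:
    """Build T-SQL that maps the login into each database (if needed)
    and adds it to the db_backupoperator role there."""
    blocks = []
    for database in databases:
        blocks.append("\n".join([
            f"USE {quote_name(database)};",
            "IF NOT EXISTS (SELECT 1 FROM sys.database_principals"
            f" WHERE name = {quote_text(login)})",
            f"    CREATE USER {quote_name(login)} FOR LOGIN {quote_name(login)};",
            # ALTER ROLE ... ADD MEMBER needs SQL Server 2012 or later;
            # older versions use sp_addrolemember instead.
            f"ALTER ROLE db_backupoperator ADD MEMBER {quote_name(login)};",
        ]))
    return "\n\n".join(blocks)


if __name__ == "__main__":
    # Hypothetical service account and database names.
    print(grant_backup_operator("CORP\\svc_backup", ["Sales", "HR"]))
```

New databases still need the same treatment after they are created, so a script like this would have to be rerun or scheduled.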

I will keep this thread running as it appears there are some other issues. Enjoy!

Finalizing SQL Backup Assessment (Part 3)

After testing HyperBac yesterday as part of this week's SQL Backup tools series, we realized that SQL Backup Pro is the way to go. The HyperBac compressed backups were not smaller than those from either SQL Server native compression or SQL Backup Pro. However, all three integrated well with our Ola Hallengren maintenance scripts. We also decided to do one native backup a week for disaster recovery purposes in case we cannot get the tool to work following a disaster. With a proprietary tool, my manager felt better in a DR scenario having at least one native backup, so ‘we gotta do what we gotta do’ to keep everyone happy.

I have recommended to Red Gate that they add more automation to the scheduling of Virtual Restore jobs, so that one job can grab all of the databases instead of requiring an individual job for each database. That, I think, is the only area I found to be lacking in the tool.

Enjoy your weekend.

Update on Backup Tool Testing (Part 2)

After a day of testing, we were able to integrate our Ola Hallengren solution with Red Gate SQL Backup Pro v7.2, shrinking the backup of one database from 1.2 GB with SQL Server native compression to 700 MB using compression level 4. This is a nice reduction for us with storage space being at a premium. Today we will be testing the SQL HyperBac solution.

All that was needed for Ola’s script was to add the following:

@Compress = 'Y',

@BackupSoftware = 'SQLBACKUP',

@CompressionLevel = 4

For HyperBac the settings would be:

@Compress = 'Y',

@BackupSoftware = 'HYPERBAC'
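To show where those parameters sit in a complete call, here is a hedged sketch in Python that assembles an `EXECUTE dbo.DatabaseBackup` statement. The directory, the `'USER_DATABASES'` selection, and the helper names are assumptions for illustration; only the parameters quoted above come from our actual setup.

```python
def sql_string(value):
    """Render a T-SQL string literal, doubling any single quote."""
    return "'" + value.replace("'", "''") + "'"


def database_backup_call(directory, backup_software=None,
                         compression_level=None, copy_only=False):
    """Build an EXECUTE statement for Ola Hallengren's DatabaseBackup.

    Leaving backup_software as None produces a native SQL Server backup.
    """
    params = [
        ("@Databases", "'USER_DATABASES'"),   # assumed selection
        ("@Directory", sql_string(directory)),
        ("@BackupType", "'FULL'"),
        ("@Compress", "'Y'"),
    ]
    if backup_software is not None:
        params.append(("@BackupSoftware", sql_string(backup_software)))
    if compression_level is not None:
        params.append(("@CompressionLevel", str(compression_level)))
    if copy_only:
        params.append(("@CopyOnly", "'Y'"))
    body = ",\n".join(f"    {name} = {value}" for name, value in params)
    return "EXECUTE dbo.DatabaseBackup\n" + body + ";"


if __name__ == "__main__":
    # Hypothetical backup folder.
    print(database_backup_call(r"D:\Backups", "SQLBACKUP", 4))
    print(database_backup_call(r"D:\Backups", "HYPERBAC"))
```

Calling `database_backup_call(r"D:\Backups", copy_only=True)` leaves out `@BackupSoftware` and adds `@CopyOnly = 'Y'`, which would correspond to a native copy-only backup.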

Enjoy!

Backup Tool Testing Begins (Part 1)

Today we are beginning our testing phase, comparing the default SQL Server backup solution to Red Gate HyperBac and SQL Backup Pro 7. We have already purchased the Red Gate tools, but simply have not had the time to implement them. We did some initial testing, but it has been over a year and there is a newer version available now. We are curious how well this integrates with our ultimate maintenance plan by Ola Hallengren. Any input?

Miscellaneous Backup Notes

This week I have been teaching my understudy about backups and restores. Here are some important things we went over this week:

- Checking “Verify backup when finished” does not ensure that the backup is good.

- Checking “Perform checksum before writing to media” does not ensure the backup is good.

- Checking both of the options above also does not ensure that the backup is good.

- Checking both options and performing a RESTORE VERIFYONLY does not ensure that the backup is good.

- The only way to ensure without a shadow of a doubt that a backup is good is to use both options and then restore the backup somewhere else and run DBCC CHECKDB on the restored database.

- Unless you are regularly restoring your backups you do not have a backup solution.

- If you switch from the simple recovery model to the full recovery model, you need to take a full backup to start the log chain.

- In the full recovery model (or the bulk-logged recovery model) you must back up the transaction log regularly enough to keep the log from filling up. This truncates the log and allows you to provide point-in-time recovery. Mileage may vary on how often to perform this backup, depending upon the size and requirements of your database.

- When you need an ad hoc backup in the full recovery model, make sure that you check the “Copy-only backup” option. Otherwise the ad hoc backup becomes the new base for later differential backups, and if you then move that file to another location you will screw up your recovery options.

- In the full recovery model, using differential backups can reduce the number of log backups that you have to restore.
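The recovery model and copy-only points above translate into a handful of T-SQL statements. The following is a small sketch in Python that builds them as text; the database name, file paths, and function names are placeholders, not our real configuration.

```python
def quote_name(name):
    """Bracket-quote an identifier, doubling any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def quote_text(value):
    """Render an N'...' string literal, doubling any single quote."""
    return "N'" + value.replace("'", "''") + "'"


def start_full_recovery(database, full_backup_file):
    """Switch to the full recovery model, then take the full backup
    that starts the log chain."""
    return [
        f"ALTER DATABASE {quote_name(database)} SET RECOVERY FULL;",
        f"BACKUP DATABASE {quote_name(database)}"
        f" TO DISK = {quote_text(full_backup_file)} WITH CHECKSUM;",
    ]


def log_backup(database, log_backup_file):
    """Regular log backup; this is what lets the log be truncated."""
    return (f"BACKUP LOG {quote_name(database)}"
            f" TO DISK = {quote_text(log_backup_file)} WITH CHECKSUM;")


def ad_hoc_backup(database, target_file):
    """Ad hoc full backup that does not become the differential base."""
    return (f"BACKUP DATABASE {quote_name(database)}"
            f" TO DISK = {quote_text(target_file)} WITH COPY_ONLY, CHECKSUM;")


if __name__ == "__main__":
    # Hypothetical database and paths.
    for statement in start_full_recovery("Sales", r"D:\Backups\Sales_full.bak"):
        print(statement)
    print(log_backup("Sales", r"D:\Backups\Sales_log.trn"))
    print(ad_hoc_backup("Sales", r"D:\Temp\Sales_adhoc.bak"))
```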

Enjoy!

Corruption and Verifying Backups

This morning I had a conversation with a Subject Matter Expert and Application Administrator who asked me quite simply for a verified backup before he does an install on Saturday. My response was that I could test it manually for them, and I was willing to do so, but currently with our infrastructure the nightly backups are not tested without manual intervention. I have tried to change this policy for some time, and unfortunately it may not change until something bad happens. With this in mind, I do the best I can to minimize the risk, given that I cannot automate a solution to restore databases on a regular basis as I would prefer. How can you minimize the risk?

- Use the WITH CHECKSUM option in your backup scripts. Read Paul Randal’s article The Importance of Validating Backups on SQL Server Central for more information.

- Use the RESTORE VERIFYONLY command (we use Ola Hallengren’s backup solution, whose verify option runs this command for us).

- Use WITH CHECKSUM on the RESTORE command to recheck the page checksums in the backup as well as the checksum of the backup file itself.

- Automate or manually restore your databases from scratch on a regular basis. (This is where we are lacking and where I want to take my servers).

- Once you have restored, then run DBCC CHECKDB against the restored database.
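Strung together for a single database, the steps above might look like the statements generated by this Python sketch. It is an illustration under assumptions, not our production script: the database name, the `_Verify` suffix, the logical file names, and the paths are all hypothetical.

```python
def quote_name(name):
    """Bracket-quote an identifier, doubling any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def quote_text(value):
    """Render an N'...' string literal, doubling any single quote."""
    return "N'" + value.replace("'", "''") + "'"


def verification_steps(database, backup_file, data_name, log_name, restore_dir):
    """Return the T-SQL statements, in order, for one verified backup.

    data_name and log_name are the logical file names inside the backup.
    """
    copy = database + "_Verify"
    data_file = f"{restore_dir}\\{copy}.mdf"
    log_file = f"{restore_dir}\\{copy}.ldf"
    return [
        # 1. Back up with checksums.
        f"BACKUP DATABASE {quote_name(database)}"
        f" TO DISK = {quote_text(backup_file)} WITH CHECKSUM;",
        # 2 and 3. Verify the backup file and recheck its checksums.
        f"RESTORE VERIFYONLY FROM DISK = {quote_text(backup_file)} WITH CHECKSUM;",
        # 4. Restore from scratch under a different name.
        f"RESTORE DATABASE {quote_name(copy)} FROM DISK = {quote_text(backup_file)}"
        f" WITH CHECKSUM, MOVE {quote_text(data_name)} TO {quote_text(data_file)},"
        f" MOVE {quote_text(log_name)} TO {quote_text(log_file)};",
        # 5. Run the consistency check against the restored copy.
        f"DBCC CHECKDB ({quote_name(copy)}) WITH NO_INFOMSGS, ALL_ERRORMSGS;",
    ]


if __name__ == "__main__":
    for statement in verification_steps(
        "Sales", r"D:\Backups\Sales.bak", "Sales", "Sales_log", r"E:\Restores"
    ):
        print(statement)
```

The restored copy is left in place here so that any DBCC CHECKDB findings can be investigated; dropping it afterwards would be a separate step.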

If you are not doing all five, then you cannot say confidently that your backups are verified. However, even if you are doing all five, keep in mind that there is no foolproof guarantee against corruption; this merely minimizes the destruction by having viable backups. I hope this helps. Enjoy!