Category Archives: Troubleshooting

Weird Problem with SSAS Not Starting

After building a new cluster to replace a 2005 cluster here in the office, I discovered Analysis Services would not start. Usually I do not put Analysis Services on the same box; however, one of our vendors requires SSRS, SSAS, and the Database Engine to be on the same instance. Frustrating, I know.

When I installed the cluster, everything was operational. However, after I applied Service Pack 2 to the 2008 R2 Enterprise nodes, Analysis Services would not start. The first time, I did not notice that the failure began after the service pack was applied; I only made the connection once I removed everything and started over fresh.

The logs were not much help, and I could not find much on the internet until a friend suggested that the service account running SSAS needed to be in the local Administrators group. Problem solved. Now my lesson learned is here for you as well. Enjoy!

Red Gate Virtual Restore Scripting (Part 10)

If you have been following along since December, then you know that I have been investigating the Red Gate Backup and Restore bundle, which we want to implement alongside Ola Hallengren’s backup maintenance scripts. One of our last stumbling blocks has been automating and scripting the Red Gate Virtual Restore product. We have moved methodically through the list and, with the help of Red Gate’s excellent support department, resolved each item, including this one.

The problem we had was that when we tried to verify the backup of one database, which is considerably larger than all of the other databases on this server, through restoration and DBCC CHECKDB, we did not have enough space on the drive using the restore script we created last week. That scripting solution is great as long as there is enough space on the drives to attach the database files from backup and then drop them.

The beauty of the Virtual Restore product, in case you did not already know, is that it can restore these files with a much smaller footprint than the actual database files take up in the existing format. However, the problem is that it is strictly a wizard at this point: it grabs a specific backup, runs the restore manually, and then drops the database once it has checked integrity and consistency. This is a great product, but scripting should be included; without it, the product does not help much, especially when you have hundreds of databases.

We ran the wizard and then viewed the script:

RESTORE DATABASE [TestDB_Virtual] FROM

DISK=N'I:\TestDB\FULL\SQLPROD40_TestDB_FULL_20130101_213217.sqb'

WITH MOVE N'TestDB_Data' TO N'F:\VirtualRestore\TestDB_Data_TestDB_Virtual.vmdf',

MOVE N'TestDB_Index' TO N'F:\VirtualRestore\TestDB_idx_TestDB_Virtual.vmdf',

MOVE N'TestDB_Log' TO N'F:\VirtualRestore\TestDB_Log_TestDB_Virtual.vldf', NORECOVERY, STATS=1, REPLACE

GO

RESTORE DATABASE [TestDB_Virtual] WITH RECOVERY, RESTRICTED_USER

GO

DBCC CHECKDB ([TestDB_Virtual])

GO

DROP DATABASE [TestDB_Virtual]

GO
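Because the wizard emits the same pattern for every database, the batch could in principle be generated rather than clicked through. What follows is only a rough sketch in Python, not anything Red Gate ships. The function names, the `_Virtual` suffix rule, and the file-naming scheme (logical name plus virtual database name) are assumptions chosen to resemble the wizard output, and the caller has to supply the logical file names and the extensions (`vmdf`, `vldf`).

```python
def sql_literal(value: str) -> str:
    """Quote a value as a T-SQL Unicode string literal, doubling single quotes."""
    return "N'" + value.replace("'", "''") + "'"


def sql_identifier(name: str) -> str:
    """Quote a name as a bracketed T-SQL identifier, doubling closing brackets."""
    return "[" + name.replace("]", "]]") + "]"


def virtual_restore_script(db_name, backup_path, logical_files, restore_dir):
    """Build a restore / CHECKDB / drop batch for one backup file.

    logical_files maps each logical file name to the extension to use,
    for example {"TestDB_Data": "vmdf", "TestDB_Log": "vldf"}.
    The target file names are an assumed convention, not the wizard's exact rule.
    """
    virtual_db = db_name + "_Virtual"
    target = sql_identifier(virtual_db)
    moves = ",\n".join(
        "MOVE {} TO {}".format(
            sql_literal(logical),
            sql_literal(f"{restore_dir}\\{logical}_{virtual_db}.{ext}"),
        )
        for logical, ext in logical_files.items()
    )
    return "\n".join([
        f"RESTORE DATABASE {target} FROM",
        f"DISK={sql_literal(backup_path)}",
        f"WITH {moves}, NORECOVERY, STATS=1, REPLACE",
        "GO",
        f"RESTORE DATABASE {target} WITH RECOVERY, RESTRICTED_USER",
        "GO",
        f"DBCC CHECKDB ({target})",
        "GO",
        f"DROP DATABASE {target}",
        "GO",
    ])


if __name__ == "__main__":
    print(virtual_restore_script(
        "TestDB",
        r"I:\TestDB\FULL\SQLPROD40_TestDB_FULL_20130101_213217.sqb",
        {"TestDB_Data": "vmdf", "TestDB_Index": "vmdf", "TestDB_Log": "vldf"},
        r"F:\VirtualRestore",
    ))
```

The generated text has the same shape as the wizard script above, so it is subject to the same behavior when it is run as plain T-SQL.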

The wizard-generated script did not work when we ran it via T-SQL because of a lack of disk space, which is the same problem we encountered using the Backup Pro restore script. However, it did work with the Red Gate Virtual Restore Wizard. We contacted support to find out why there was a difference on the same box. Basically, SQL Server does not know that the HyperBac service is running in the background. The wizard is smart enough to check your disk space, and if you do not have enough, it temporarily adds an entry to the HyperBac configuration that tricks SQL Server into thinking you have enough disk space to complete the task.

The parameter is “VirtualDiskSize=”, where the value is an integer representing the disk size in megabytes. You add the parameter to the bottom of the hyper.conf file found in the C:\Program Files (x86)\Red Gate\HyperBac\Bin folder. Then you restart the HyperBac service and the problem is solved! Next I will try to automate this script….stay tuned!
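The config edit itself is easy to script. This is a minimal sketch in Python, assuming hyper.conf is a plain text file with one `name=value` setting per line and that the default text encoding is acceptable; neither assumption has been confirmed with Red Gate. The function names are made up for illustration. Restarting the HyperBac service is left as the manual step described above.

```python
from pathlib import Path

# Location given in the post; adjust if HyperBac is installed elsewhere.
HYPER_CONF = Path(r"C:\Program Files (x86)\Red Gate\HyperBac\Bin\hyper.conf")
KEY = "VirtualDiskSize"


def set_virtual_disk_size(conf_text: str, size_mb: int) -> str:
    """Return conf_text with exactly one VirtualDiskSize=<size_mb> line at the bottom."""
    if size_mb <= 0:
        raise ValueError("size_mb must be a positive number of megabytes")
    # Drop any earlier VirtualDiskSize entry so the setting is not duplicated.
    kept = [
        line for line in conf_text.splitlines()
        if not line.strip().lower().startswith(KEY.lower() + "=")
    ]
    kept.append(f"{KEY}={size_mb}")
    return "\n".join(kept) + "\n"


def update_conf_file(path: Path, size_mb: int) -> None:
    """Rewrite the config file in place; the HyperBac service still needs a restart."""
    path.write_text(set_virtual_disk_size(path.read_text(), size_mb))
```

For example, `update_conf_file(HYPER_CONF, 500000)` would request roughly 500 GB. Keeping the text transformation separate from the file write makes it possible to try the function on a copy of the file first.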

Red Gate Backup Restore of the Master Database (Part 8)

One of the errors I encountered with the Red Gate Backup Pro tools, discussed extensively this month, came from running the DBCC CHECKDB command on a temporarily restored backup of the master database. It reported consistency errors that did not appear in the actual master database. The following are some interesting blog articles in response to this issue:

- Response from Red Gate.

- Response from Paul Randal and Kimberly Tripp in SQL Server Pro magazine.

Basically, you will need to check the master database separately. I run an integrity check weekly against the system databases. It is a good idea to follow the recommendations from Red Gate listed in the link above. Enjoy!

Red Gate Backup Pro Continued (Part 7)

If you have been keeping score at home, I have been trying to work out the issues of implementing Red Gate SQL Backup Pro and Virtual Restore. This series may sound like a bunch of complaints, but it is not. I am just working through the issues, hoping to help others who are struggling and to improve the product if possible. Some of these items may be easily fixed, but I could not find the information easily on their website; otherwise I would not have posted it. Or quite possibly I am just an idiot.

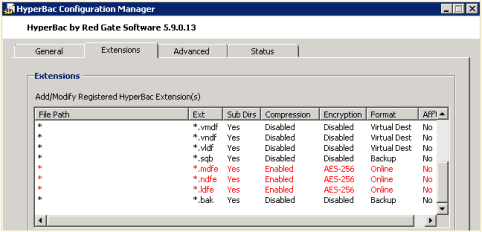

I was able to resolve one issue from yesterday’s post: the weekly regular SQL Server copy-only backup. By tweaking the HyperBac Configuration Manager settings for the BAK extension and disabling HyperBac compression (see Figure 1), we were able to get default SQL Server backups running once a week while still using SQL Backup Pro for our daily backups.

We also noticed a weird error in our SQL Monitor tool when using Virtual Restore (see Figure 2). The drive where we store our virtual restores is only 300 GB. It looks like a virtual restore raises a drive space error based upon the “actual” size of the restore instead of the virtual size.

Just in case you are wondering, I compiled my long list of quirks and submitted it to Red Gate support. I am confident that they will be able to help, as they have been excellent in the past. Personally, I just like to figure things out before I talk with support, which many probably consider a character flaw. Maybe it is male ego or pride, but I like to solve problems without help if at all possible. On the other hand, I am never afraid to ask for help; I just want to make sure I have tried everything beforehand. Enjoy.

MAP Toolkit Licensing Continued

Continuing yesterday’s post regarding the licensing issues I discovered using the MAP Toolkit, I was able to resolve the issue without much difficulty. The license was showing in the MAP Toolkit as Developer Edition. We have volume licensing, so I am now sure that the wrong installer was selected at the time of install, because we do not enter keys ourselves; they are populated by the installer.

To correct the problem, we ran the installer on the affected server, selected ‘Edition Upgrade’, and selected the media. When prompted for a product key, we had to fool the installer with some trickeration. On a separate VM, we installed SQL Server using our Enterprise media, which populated a key in the box at the same point in the installation. We then typed that key into our real installation and proceeded with the ‘Edition Upgrade’, thus allowing the installer to realize that we were licensed for a different edition. This successfully updated our edition to Enterprise, which was the license purchased for this server.

However, after stopping and restarting SQL Server to get the new edition to register, we noticed that SQL Agent was failing with no errors noted in the SQL error log or the Agent log. We ran the ‘Repair’ from the installation center (shown in figure 1) to make sure that there was no corruption from the previous edition. That did not resolve the problem. When running the agent from the command line, we got a different error that pointed us to the login being incorrect. Somehow running the Edition Upgrade locked out the AD account, which is used only by this service on this server, so a user could not have been to blame (or should not have been able to be). Once we unlocked that account, SQL Server Enterprise Edition was running smoothly! Enjoy!

Windows 8

I am setting up a Windows 8 Lenovo laptop for my wife today. She forgot the password and now we are recovering the machine. There was never an option to set up USB recovery when we created the password. Weird, because she used the same password she always uses and it had her hint in there. This reminds me of the fact that passwords are useless, because I just recovered the whole machine on reboot. There could’ve been an email reset, because the password itself is not that secure, so why bother? I think security is so far behind in terms of evolution. Will someone just put the retina scanners or voice recognition on here and let’s be done with it? Enjoy!

Deadlock Graphs

Last night I learned about graphical deadlock graphs from Fargham Butt of Microsoft. Until then, I had simply used the trace flag and read the error logs to find the information. Today I thought I would write a blog post about the subject and decided to do some research first, which is when I found an excellent blog post by Jonathan Kehayias (B|T) covering Handling Deadlocks in SQL Server. Jonathan is a Microsoft Certified Master and does a much better job than I can of explaining the whole subject, so I thought it more prudent to just refer you to his blog. Give it a whirl and enjoy!

Using the Right Tool

My week has been spent deep diving with the OpNet AppInternals Xpert and AppResponse console to learn some more valuable troubleshooting skills for the servers and applications that we administer, including SQL Server and Oracle.

Working with OpNet’s engineer, I have been able to resolve three major headaches for my team this week. I am reminded of the simple fact that sometimes the ability to do your job well consists of having the proper tools to perform the job. Given the cost of these tools, sometimes you have to be able to justify the need, but this one pays for itself many times over, and that says a lot because it is pricey.

Fail-Safe Operator

Everyone needs a backup plan, including SQL Server notifications. If something goes awry with the notifications or the msdb database is unreachable, then the fail-safe operator will ensure that mail is delivered.

To enable it for your instance, open the SQL Server Agent properties from Object Explorer; the fail-safe operator setting is on the Alert System page. Enjoy!

Beginning OpNet Monitoring

Today’s blog will be brief as I am in the middle of a weeklong process of learning the OpNet AppInternals Xpert Monitoring and Transaction Trace Warehouse. I am excited to see how well this tool can interact with our SQL Servers as well as Oracle servers, .NET application servers, and our Java-based enterprise content management system.

We are on the configuration side today, with the rest of the week set for troubleshooting various issues we have in our production environment. I will keep you posted as I learn this product. So far, it seems pretty powerful. Enjoy!